The Flaw in Yuval Noah Harari's Scientific Dystopia

Those who would become gods must first go crazy

A few weeks ago I read Homo Deus: A Brief History of Tomorrow by Yuval Noah Harari. Originally published in 2016, the book was bundled with the author’s previous offering, 2014’s Sapiens: A Brief History of Humankind, and with a small volume of Bonus Content collecting some of Harari’s more recent essays. The three paperbacks were packed together in a slipcase, the back of which boasts praise from Barack Obama, Bill Gates, and Mark Zuckerberg.

The set also includes a bookmark that announces itself as “A BOOKMARK YOU MAY NOT NEED”, beneath a quote from Bill Gates saying “You’ll have a hard time putting it down.” For good measure the bookmark also presents the descriptor “Essential reading” from the New York Times.

I purchased this product from Ollie’s Bargain Outlet, where it was priced at $9.99, marked down from $49.99—and I had a 30%-off coupon. Though I have yet to read Sapiens, I would consider Homo Deus well worth the money spent. These are luxury items, weighty both literally and metaphorically. They are meant to make consumers feel smart for owning them. If anything, these books are too high-quality in their production: the pages are the glossiest pieces of paper I’ve ever touched, and they make my ink smudge and run when I mark up the margins.

Who he is and what he serves

From the information above, you would be correct in guessing that Harari always has the correct opinions on every matter of public debate. Irreproachably, throughout the book he is careful always to criticize Nazis and Russians when giving examples of historical monstrosities. He is against the excesses of capitalism and communism. At the same time, like any reasonable person, he acknowledges the higher standards of living made possible by Our Free Market. He likewise recognizes the sacred safety nets erected in response to socialistic pressures. You see, he is very evenhanded.

Harari is of course on friendly terms with all the right people, all the good people, all the wise worldly people capable of engaging in debate that sounds very sophisticated to people who fancy themselves sophisticated. Actually, most of them are pretty smart. An advisor to Klaus Schwab, Harari functions as the court philosopher of the World Economic Forum. If he’s a villain, he’s the Desaad to Schwab’s Darkseid, perhaps.

But I do not think Harari is a villain.

Yes, it is true that many of Homo Deus’s 400+ pages argue for the goodness and inevitability of a micromanaged totalitarian dictatorship. Humanity as we know it will mercifully winnow away to nothingness, as technology usurps and abolishes the very concepts of sovereignty and free will. Many of Harari’s sentiments may as well be lines from Agent Smith in The Matrix films. “Homo sapiens is an obsolete algorithm,” the author proclaims (386-87).

But I do not think Harari is a villain. Some of his rhetoric seems to be written for effect. (For example, he tells his reader: “record everything you do, and put it online […] then the great algorithms of the Internet-of-All-Things will tell you whom to marry” (398).) Moreover, more often than not his arguments include a disclaiming coda, stressing that the sickly-sweet technological nightmare he’s just outlined is simply food for thought or what might happen; and either way we should think about it, try to decide if it’s what we want or not, and if it’s not what we want then we should see what, if anything, we could do about it.

We may not have a choice in the matter. The elite may be destined to bond with computers and exterminate the rest of us with ease. But Harari is at least telling us what, in his considered opinion, will probably happen. If any of us are ever going to try to change the future, we’d at least need an understanding of where things already seem to be going, and this is what Homo Deus attempts to provide.

It’s all in the algorithm

The most original idea conveyed in the book—and it is not Harari’s own idea, though he’s probably done the most to popularize it—is the notion of everything as algorithms. Computer data contain algorithms… the stock market is full of algorithms… the universe is a giant algorithm… and human life and behavior are also algorithms. All of the algorithms fit together, into a giant algorithm.

Harari’s directive is simply to submit to the algorithms: “[A]llow Google and Facebook to read all your emails, monitor all your chats and messages, and keep a record of all your Likes and clicks” (398). Taken out of context, this advice might seem overdramatic, or even facetious, but Harari does not seem to have intended it as such. If one could press him on this issue in person, he would very possibly explain that various data-harvesting programs are collecting almost all of our input anyway, whether we want them to or not. His provocative written statements simply prepare us for what is already happening—and for what will only keep happening more and more.

And what is the point of this mass collection of every single detail possible? Well, to help us, of course:

Suppose on an average day the words ‘headache’, ‘fever’, ‘nausea’ and ‘sneezing’ appear 100,000 times in London emails and searches. If today the Google algorithm notices they appear 300,000 times, then bingo! We have a flu epidemic. There is no need to wait till Mary goes to her doctor. On the very first morning she woke up feeling a bit unwell and before going to work she emailed a colleague, ‘I have a headache, but I’ll be there.’ That’s all Google needs.

However, for Google to work its magic Mary must allow Google not only to read her messages, but also to share the information with the health authorities. (340)

Behind this intensive caring for the health of citizen units, one soon realizes, stands a greater beneficiary of data collection: the system itself, which will learn how best to rearrange and allocate its own resources for its own optimal operations. Not only will all this technological perfection grant us the long-promised treat of exploring the universe, but the concomitant scientific breakthroughs will also be able to convert humans into gods. Hence the title, Homo Deus. On the way to complete domination of time and space, the beneficent computer codes may intervene to save us from a deadly common cold. No detail is too small or large for their gracious guidance. As Harari explains, these same “great algorithms of the Internet-of-All-Things” could also tell us when it’s the correct moment “to start a war” (398).

But as I have said, Harari is not a villain; he is at times humble, and he is circumspect. He merely presents the current information and its consequences to the best of his capacity. Everything is an algorithm, including each of us, and smaller algorithms simply must yield to or merge with larger ones. That is how he sees matters, but he is not totally obstinate in this thinking. Toward the end he even admits that “Maybe […] in twenty years […] we’ll discover that organisms aren’t algorithms after all” (399). A disarming statement from one of our few contemporary intellectuals brave enough to admit: Maybe I’m wrong.

Obey the inevitable everything

Harari is basically right. Everyone with a clue knows where all of this is going, more or less. You don’t need to read Ellul (though that would be best) or (wince) Kaczynski; it is simply in the air that the intrusion of technology into human experience knows no bounds. One way or the other, technology always wins. For every tree that anti-logging protestors save, a dozen apps appear on the protestors’ phones. The most correct measure of modern processes is not CO2 levels or gas prices: the best metric is artificiality. We have an increasing artificiality, internally and externally, on our planet, in our world, and in our consciousnesses and bodies.

Harari senses and accepts that the escalation of monitoring technologies will proceed unabated. Everything and everyone will be tracked and traced, and all data will enter into algorithms that will in turn spit out directives for human beings to obey.

You should eat more vitamin-C-containing fruit because your levels are low.

You are not allowed to drive your motorcycle today because you have used up your weekly allotment of frivolous energy.

We have taken the liberty of cashing out all your holdings of a certain company’s stock because their CEO has been naughty.

We are no longer allowing you to exchange text messages with the person you went on a date with two weeks ago, because based on all sorts of psychological profiling we know that further contact would be inauspicious.

It is not even necessary to collect literally every data point for helpful programs like these to be implemented. With human encouragement, we are already seeing that the algorithms will simply work with whatever data they have and do as much as they can to direct our behavior.

The algorithms are always growing; they are always getting more data; and various organizations are already giving them increasing power over human activity. Harari simply, courageously notes that there’s no reason for these trends not to continue.

Eventually the algorithms will have more agency than human beings. We may be there already, in fact. On a broader level, I would say that the autonomy of technological development has long surpassed the ability of humans to direct it. Human leadership hasn’t really been in charge of our destiny since the Second World War, at the latest. Technology uses us as its tools now, not the other way around. But Harari focuses not on technology as a whole so much as on algorithms specifically. And while the concept of algorithm obviously maps onto the Ellulian technological system quite well, algorithm may also lay claim to the more natural processes of life—and of the universe itself as a big algorithm in the sky.

The NPC decoded

What is the colloquial version of “Submit to the algorithm”? “Go with the flow.” Note the commonality here, the insinuation that we should not think. Don’t worry about Meta; don’t second-guess Google; just give them your info because it’s easier. If everyone else is doing it, you should just do it too. Go with the flow, the flow of information and data. Don’t make waves; ride along with the waves that are already there, created by forces much larger than you. Make it easier for the algorithms to capture you.

Harari’s descriptions of human algorithms recall the NPC memes of recent years. What is an NPC person? Someone who goes along with the program—someone who has fully submitted to the algorithm, who does what the algorithms tell them to do. And why have they done what the techno-informed, info-infused social cues told them to do? Either for their own good or for the sake of their personal health… Or for someone else’s good, or for someone else’s health… For society as a whole, or… Just for the good of the system, y’know?! That’s what they say; that’s what they think; and the data backs them up.

I often dislike NPC memes on an aesthetic level, but of course they have their functions. They have been accompanied by various pieces of evidence, mostly anecdotal, suggesting that many people do not have internal monologues or cannot visualize things in their minds. This reinforces the belief of the memers that large portions of their fellow humanoids lack basic consciousness or standard mental capabilities.

Harari’s book touches on this theme as well, and as you might expect he tends to take the side of the NPCs. After admitting that consciousness is indeed a real thing, Harari wonders whether or not “it fulfils [any] biological function”—and, if not, then “consciousness may be a kind of mental pollution” (117). Consciousness, you see, could impede the algorithm. After all, “no known algorithm requires consciousness in order to function” (122).

Previous conceptual models of the perfect citizenry mercifully allowed people to retain sentience. The consumeristic “mass man” of 20th-century America still had to know what he wanted to buy and ostensibly decide who he wanted to vote for. Self-knowledge of his desires was no impediment to the capitalistic system serving him and growing from his efforts and monetary expenditures. The communistic “Soviet man”, an ideal of the USSR, was not to be a thoughtless drone: he was to have a clear understanding of his place in the world and how the Marxist paradise was to function. This was to help the comrade better appreciate his society. Knowing that it was good and good for him, the new Soviet man could then explain his superiority over the bourgeoisie in perfect academese.

The NPC by contrast must simply follow the algorithm—there is no knowing, no thinking or discernment required. It does no good for him to wonder how his actions contribute to larger turns of the gyre. Maybe he shouldn’t even suspect the existence of larger machinations, much less wonder which avatars, brands, nations, interests or philosophies they might serve.

In Harari’s world, the masses need only thoughtlessly input as much data as possible into the computerized system at large. The only modicum of intellectual effort left will go to the specialists—and thanks to automation fewer and fewer of them will be needed as posthuman reality comes into view. While the algorithm still suffers them to exist, these specialists serve the legacy function of providing a human face for the system’s directives. These credentialed experts in rule-following will take your personal info and “analyse the results according to well-known statistical databases,” before making simple decisions such as “what medicines to give you or what further tests to run” (161).

Just give these impressive people your data and do what they say. Thinking or questioning anything could get in the way or slow down the algorithm. Any hesitation on your part is actually extremely selfish because it puts some amount of stress on the entire system and everyone it serves. What if your slowness in ticking “OK” on a terms-of-service agreement is proven to have caused a 0.003-second delay in the algorithms detecting a somewhat imminent famine in Pakistan? What if that statistically results in even 0.1 more deaths? What should we do with you then?

Consciousness obsolescence

Other passages of Homo Deus make a distinction between intelligence and consciousness—and this is truly a line of thought worth pursuing. Thwarting expectations, perhaps, Harari contends that computers simply cannot become conscious. Artificial intelligence differs greatly from natural consciousness, after all, and the latter may prove impossible to generate with digital codes—especially if we don’t even know what consciousness is exactly. Harari admits that to date “science knows surprisingly little about mind and consciousness” (108). But while we cannot create conscious computer programs, we can create self-learning computer programs that outpace the intellectual capacities of human beings. On more and more fronts every day, computers are learning how to do better and faster mental work than human beings can do in their heads, with the only difference being that the computers are not conscious. Does this make humanity obsolete? Homo Deus invites us to argue for our continued viability, if we can. Yes, we’re conscious—but so what? Explain why that even matters.

As I have repeatedly stressed, Harari is calling our attention to the real questions and challenges that humanity will have to face: “Suppose non-conscious algorithms could eventually outperform conscious intelligence in all known data-processing tasks—what, if anything, would be lost by replacing conscious intelligence with superior non-conscious algorithms?” (399). It’s an honest question—What is the value of consciousness?—and one that doesn’t have an easy answer beyond old sentimental claptrap that has already proven ineffective. “All You Need Is Love” didn’t save the world. For well over half a century, pop culture shat out rapid-fire regurgitations of maudlin humanism, to no avail. There was hero modeling for several generations, and yet none of it proved effective in battling the techno-totalitarian drift. Harari knows the situation and recounts it with some relish:

In the climactic scene of many Hollywood science-fiction movies, humans face an alien invasion fleet, an army of rebellious robots or an all-knowing super-computer that intends to obliterate them. Humanity seems doomed. But at the very last moment, against all odds, humanity triumphs thanks to something that the aliens, the robots and the super-computers didn’t suspect and cannot fathom: love. The hero, who up till now has been easily manipulated by the super-computer and riddled with bullets by the evil robots, is inspired by his sweetheart to make a completely unexpected move that turns the tables on the thunderstruck Matrix. Dataism finds such scenarios utterly ridiculous. ‘Come on,’ it admonishes the Hollywood screenwriters, ‘is that all you could come up with? Love? And not even some platonic cosmic love, but the carnal attraction between two mammals? Do you really think that an all-knowing super-computer or aliens who contrived to conquer the entire galaxy would be dumbfounded by a hormonal rush?’ (394-95)

The authenticity defense

Leaving aside these failed appeals to emotions and emotionality, the concept of authenticity may be humanity’s ideological bulwark against technopoly. Because we have consciousness and computers do not, human experiences (and their resultant decisions) can be said to possess an authenticity that algorithms and A.I. will always lack. A robot who smiles at a sunny day would smile because his programming told him to smile. Even if the robot’s sensors accurately receive the light and heat of the sun, the response would be a mimicry of pleasure and a result of operant conditioning, not an authentic reaction to the universe. And Harari would agree, of course, that the robot lacks consciousness, but he would ask why that even matters. It matters because our consciousness in a sense consecrates our experience of the universe, to extend T.S. Eliot, “When the tongues of flame are in-folded / Into the crowned knot of fire / And the fire and the rose are one,” all under the seal of authenticity. Authentic experiences are superior to inauthentic ones, and consciousness is a necessary precondition for authenticity.

Harari, however—and he is far from alone in this—all but rejects the very concept of authenticity, suggesting that it is a vestigial holdover from the previous, now-concluded era. “The liberal belief in individualism”—a misplaced conviction—merely caused people to spout subjective, humanistic illusions, such as: “I am an in-dividual—that is, I have a single essence that cannot be divided […] [D]eep within myself [is] a clear and single inner voice, which is my authentic self. […] My authentic self is completely free” (333). According to Homo Deus, these are to be understood as quaint, pathetic old notions within the current setting of overdetermined technical progress.

How, then, could authenticity serve as a stable building block of reality when, as per Harari, human consciousness itself possesses no authentic outlook or voice? “The single authentic self is as real as the eternal soul, Santa Claus and the Easter Bunny,” he explains. “Humans aren’t individuals. They are ‘dividuals’” (293). The argument here, as you might expect, proceeds from a materialist outlook that stresses how the human “body is made up of approximately 37 trillion cells” (292), and each of our thoughts results from specific neuron-firings in the brain, which are actually triggered by external stimuli outside our control. Moreover, our behavior and opinions can be broken down into patterns, internal and external, predicted by algorithms, personal and universal. And therefore once again liberalism (classical or otherwise) simply must fall defenseless before a new technologically augmented scientism: There is no soul; there is no self; there certainly is no authentic self. There is no such thing as authenticity.

This last conjecture is not Harari’s but is rather an extrapolation of his thought. I have written the previous three paragraphs in order to rebut the consequence of his thinking, to argue for the existence of authenticity.

What, then, is authenticity? It is the absence of artificiality. It is the more direct perception and experience of reality. Usually, of course, authenticity accords with nature, as distinct from situations orchestrated by technology. An in-person relationship between two housemates is more authentic than an online relationship between two people catfishing each other. Wrestling is a more authentic sport than baseball, while baseball is a more authentic sport than car racing: simple wrestling does not need to employ any technological augmentation, whereas baseball demands the manufacture and use of various equipment, and car racing is predicated on the auto industry.

Note, of course, that the more authentic experience may not be the preferable one. Walking twenty miles to a grocery store, for example, would be a more authentic experience than riding a bus there. Traveling on foot would certainly provide you with a better perception of your surroundings, the weather, the neighborhoods, their relations to each other, with each other, and with you. You would be conscious of these elements and experience something of their inner meaning in ways that a cataloging robot would not and could not. But valuing authenticity as a concept doesn’t demand that we always choose the more authentic route in life. Don’t walk twenty miles to go to a store.

Nonetheless, authenticity exists as a valid criterion with which to evaluate behaviors, individuals, societies and other constructs. When taking stock of the situation as Harari wants us to do, evaluating the varied degrees of authenticity across the contemporary landscape would certainly inform our opinions. (However much free will we have—or don’t have—when making judgments based on these opinions is another matter entirely.) It may be that authenticity, like consciousness, cannot be wholly subsumed into or reproduced by the algorithms, and thus in authenticity we may have hit upon another uniquely human quality. The defensive value of that quality—if any—in the face of the aforementioned “all-knowing super-computer” remains an open question for now.

Toward better killers and more sustainable traumas

While we wait to find out whether or not the algorithms can completely eradicate the human race, Harari suggests that we may as well use new technologies and medicines to improve our capabilities and ease our pain. Curiously, the favored examples of new gadgets and drugs that Harari provides, all throughout the book, indicate his particular interest in things that allow people to become better at killing each other and having less guilt afterwards.

For instance, he refers to a “transcranial helmet” that “produces weak electromagnetic fields and directs them towards specific brain areas, thereby stimulating or inhibiting select brain activities” (290). Designed for soldiers, this device is apparently already invented and in use, at least in experimental capacities. Its purpose is to cause the wearer to become immune to otherwise overwhelming stimuli, such as explosions or gunfire on the battlefield, while also gaining an intense focus and ability to take out enemy targets with ease. A civilian journalist testing out the helmet in a simulated environment “began picking off the virtual terrorists one by one, as coolly and methodically as if she were Rambo or Clint Eastwood” (290). Without the augmentation, however, she experienced sensory overload and said that “panic and incompetence” rendered her useless (290).

But why limit these techniques by restricting them to temporary headwear? Why not fit similar but smaller devices inside the head? And what about the guilt that some people might feel after ending others’ lives for dubious political reasons? To those ends, Harari relates how

The US military has recently initiated experiments on implanting computer chips in people’s brains, hoping to use this method to treat soldiers suffering from post-traumatic stress disorder. In Hadassah Hospital in Jerusalem, doctors have pioneered a novel treatment for patients suffering from acute depression. They implant electrodes into the patient’s brain, and wire them to a minuscule computer implanted in the patient’s chest. On receiving a command from the computer, the electrodes transmit weak electric currents that paralyse the brain area responsible for the depression. The treatment does not always succeed, but in some cases patients reported that the feeling of dark emptiness that tormented them throughout their lives disappeared as if by magic. (289)

As for medication, Harari meticulously notes how various drugs are already used to mollify and modify human beings. More and more people take more and more pills for more and more reasons. As anxieties of all sorts increase, the purview of mind-altering medicines can only continue to expand as demand grows. Importantly, the real reason for this is so the technological system itself can function better: the quick-fix easement of persons undergoing trauma or discomfort is simply a means to an end. Psychotropic drugs have become popular because they help the system; they help the system because, in the short-term at least, they help people function better within the system, to the system’s ends. Even misdiagnoses—or drugs that eventually have negative effects on people’s functionality—also prompt more research and funding for the medical industry.

Harari provides the crucial example of Ritalin being prescribed to school children en masse and how evolving scientific techniques have shifted the paradigm: “People have been quarrelling about education methods for thousands of years. […] Yet hitherto everybody still agreed on one thing: in order to improve education, we need to change the schools. Today, for the first time in history, at least some people think it would be more efficient to change the pupils’ biochemistry” (40). Yes, some people—more and more all the time—take it for granted that the students’ brains must yield to the ever-expanding bureaucratic architecture and the social environment of distraction. We must not overhaul (and possibly diminish the amount of) public schooling. We must not remove the always multiplying video screens from the lives of children so they can have an easier time concentrating. No, in the current situation we can scarcely even consider these things. Instead, rather than dial things back to a previous level of artificiality, we would rather chemically alter the brains of children on a very wide scale. This is simply how things have played out.

Likewise, rather than decline to fight wars whose rationales remain murky and whose outcomes benefit few if any, we have volunteer armies whose experiences demand mass-drugging while on duty and long afterwards. As Harari reports: “12 per cent of American soldiers in Iraq and 17 per cent of American soldiers in Afghanistan took either sleeping pills or antidepressants to help them deal with the pressure and distress of war” (40). Long, bizarre, largely pointless wars are made more possible, you see, because medications numb the brains of those fighting and killing and dying for no discernible reason.

With our every choice we may think we’re helping ourselves and assuaging our pain. We may believe we are engaging with new electronics and new powders and potions that will help us become the best versions of ourselves possible. We think it’s all for us, a godsend in response to our most personal needs, so we can then get along in life and become more autonomous. But as honest Harari says:

Technological progress has a very different agenda. It doesn’t want to listen to our inner voices. It wants to control them. Once we understand the biochemical system producing all these voices, we can play with the switches, turn up the volume here, lower it there, and make life much more easy and comfortable. We’ll give Ritalin to the distracted lawyer, Prozac to the guilty soldier and Cipralex to the dissatisfied wife. And that’s just the beginning. (369)

Harari is not cheerleading these developments so much as he is noting what has happened and what will continue to happen. A distracted lawyer or dissatisfied wife might in some way drop out of society, but the system doesn’t want that. The system wants engagement (feedback and data), so it will try to make us happy to keep us going with it. Whatever we might think of Harari, his assessment is accurate. Yes, he does repeatedly refer to brain-altering techniques (electrical and medicinal) specifically with regard to how they can be used to help soldiers kill better with less guilt afterwards (so they can then go kill even more). And yes, this is unseemly. But this is, quite seriously, an obvious point of interest for these sciences and technologies as far as the system itself would be concerned. Despite its frivolities and comforts, the system is, at heart, a fundamentally anti-human machine.

Superman trips on his cape

Of course, Harari believes that the select few, the specialists of the specialists, will access new medicines and technologies and thereby become a new species, Homo deus, as Homo sapiens becomes outmoded. The elite will access life-extension, will have the fastest microchips augmenting their brains, will attain super strength and complete mastery over their emotions. And the research toward these abilities is already well underway. The book and the examples in it may be fairly recent, but the idea itself is old, the dream of the ages, preceding even Faust and Frankenstein: To cheat death and become gods!

Aside from divine intervention, the only conceivable flaw is this: That the elite themselves, like so many of the people beneath them—specialist and pleb alike—will become so dysfunctional that they will mismanage their ascension.

Think of the human population as a pyramid. The bottom layers are “useless eaters,” as those above might call them. The middle layers are the specialists and white-collar engineers. And at the top layer we find the rulers, the elite: Schwab, Harari, Gates, etc. A larger lower base is required for there to be a pyramid in the first place. The technological and scientific progression of the last century-plus, however, has been altering the societal physics. Those on the higher levels find that they simply do not need so many underlings. Technology can do the work of proles, and in fact it can do the work of more and more proles all the time. And now with computer learning we find that fewer and fewer mid-level bureaucrats are necessary either. Quite taken by the idea of population reduction, some of the elite may well believe that few if any need to remain beneath them. They have faith that technology will soon allow the very top of the pyramid to float on its own, without any dirty humanoid subspecies supporting it. But other elites worry, quite rightly, that actually a system with artificial intelligence may not need any humans at all, not even them, no matter how impressive these would-be supermen may consider themselves to be.

At the current moment, those near the top of the pyramid may not care about local farming or the manufacture of family-friendly automobiles, but nonetheless we are still in a vaguely pyramidal system in which the lower levels matter. The elite are relying on the most advanced scientists and engineers to build a posthuman future in which they can become Homo deus. The most advanced programs and work projects rely on subprograms and subprojects. All the way down the scale, each level of research tends to rely on the levels beneath it for reliable data, for underlying premises and specifics, and sometimes even for funding. If we could survey the entire hierarchy of all research being done on the planet, the very top, most-science-fictiony projects would be the concern of the very few, but before too far down the ladder we would find projects that tie into the interests of entire nations and populations: gas pipelines, vaccines, national defense, etc. The tiny elite have a handful of primary goals (life-extension, superhuman biology, mental maximization, DNA editing), but work in those directions depends on a huge interconnected structure.

The elite rely on the specialists, the specialists rely on the not-so-specialists, and the not-so-specialists rely on the plebs unclogging their toilets for them. Any stress on any level of the system (from Elon Musk getting an accusatory text from his estranged child, to a lower-level engineer having a clogged drain for two weeks) can have a ripple effect through the entire human chain. Musk then irresponsibly dumps some stock, causing others’ portfolios to plummet. Or the constipated engineer who can’t take a crap at home makes a mistake while working on a mass-market project.

All these ripples produce further dysfunctions. Never before has humanity been so interconnected. Thus never before have we all been so susceptible to others’ stresses and to the aftereffects of any and all stress. Within the civilizational breakdown that is currently taking place (and the decline is obvious to nearly everyone by now) we see mass dysfunction on display, and the overall trendline seems to show that the level of dysfunction tends to keep rising. How could it not keep rising, when we are all linked so closely now that any dysfunction anywhere disrupts other nodes? Think not only of supply-chain disturbances but also of mental contagions, digital addictions, contaminated monetary pools.

Harari believes drugs and electroshock techniques will be able to dampen depression and anxiety in such successful fashion that these conditions will be virtually eliminated. I don’t believe him, but for the sake of argument—fine, put these ailments (which are going to the moon, by the way) completely aside. Consider only dysfunction and you will find the weak point of the overall plan.

A few times on my podcast I have speculated that the biggest point of contention will be whether or not the system can breed artificial humans before the natural human infrastructure collapses under the escalating stress. Harari’s book touches on these matters: breakthroughs in DNA alteration are championed, a picture of in vitro fertilization precedes page one. Artificial wombs will be seen as freeing women from the unfair duty of bearing children. When and if it happens, there will be designer babies tailor-made to serve and expand the system that created them. The potential to rebel (or even meaningfully resist) will be edited out or turned off permanently at the root biological level. These future children will be pure algorithm or very close to it. You see, the system considers even the “NPC” masses you complain about to have retained far too much free will and unpredictability.

But all the dysfunction you see around you that annoys you so much? Get ready for a shocker: Maybe it’s a good thing. The dysfunction signifies that maybe the bloodless ones won’t win, that the crystal palace won’t emerge. To save the world, before it’s too late, maybe as many of us as possible will need to turn into a bunch of complete fuck-ups. This would not be any sort of “accelerationism” or deliberate sabotage but rather the natural consequence of an unnatural situation. Who knows what would happen afterwards, but for scientific totalitarianism to be averted people need to start screwing up. Luckily, that is in fact what seems to be happening—at the bottom and especially at the top.

In screwups… salvation

To summarize: The pressures of the system are outstripping the system’s capacity to mitigate them, particularly on the psychological level. The system continues to become better and better at drugging the increasingly frenzied population, but the ever-more-artificial environment causes escalating dysfunction that will reach a breaking point before the human race can be rendered obsolete. This threatens if not the entire infrastructure then at least the time and research needed for the elite to become superhumans in any sort of remotely orderly or sustainable fashion.

The elite themselves are not immune from the disorienting and distracting effects of the technological society. They are losing their heads as the situation intensifies; they are screwing up more and more often. We see evidence of this in the news constantly. We even see evidence of dysfunction in Harari’s book.

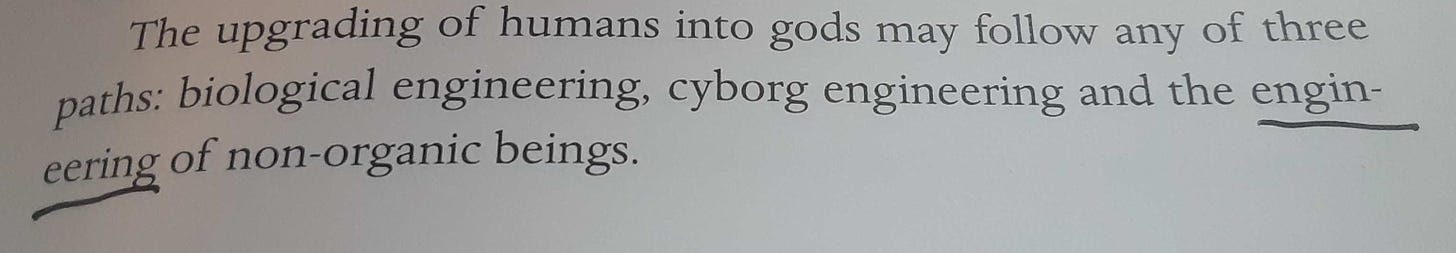

On page 43 of Homo Deus, Harari writes that “The upgrading of humans into gods may follow any of three paths: biological engineering, cyborg engineering and the engineering of non-organic beings.” On the printed page there is a line-break midway through engineering, and it is hyphenated as follows:

The correct hyphenation would be “engi-neering”—the n forms a syllable with -eer, not gi-.

This is, admittedly, a minor error, and it is the only one I noticed in the book’s 400+ pages. Still, it is significant for the paperback edition of a big #1 New York Times Bestseller to contain a typo. A number of professional editors must have missed it. Evidently no readers of the previous hardcover edition (which also contained the slip-up) alerted the author or the publisher. Possibly they did not even register “engin-eering” as incorrect hyphenation. Whatever spellchecker programs passed over the text also missed the error, and quite likely an automatic linebreaker app caused it in the first place. Whatever happened exactly, all the vaunted algorithms, technological and biological, failed, leaving the major work of a public intellectual stained.

I feel confident in suggesting that a decade or two ago this would not have happened. Back then, human editors knew how to hyphenate. Most readers knew proper syllabification. Someone would have noticed.

Homo Deus was first published in 2016; the paperback was published in 2018. In 2020 Harper Perennial released a boxed edition of Homo Deus that, as noted above, includes a small volume of Harari’s recent essays, all of which initially served as articles in major publications (The Guardian, the Financial Times, and the New York Times). These writings, all composed and released in 2020, pontificate about COVID-19 and (to a lesser extent) the U.S. presidential election of that year. This slim volume of Bonus Content contains only 24 pages, and yet there are more errors in this text than in the entirety of Homo Deus.

On page 4 of the supplement Harari compares saintly virus-fighting scientists to superheroes, specifically to “Spiderman and Wonder Woman”. Needless to say, as billions of fans should know, Spider-Man is hyphenated, and the M capitalized. Harari didn’t know this, nor did the editors at The Guardian, nor the Harper editors who republished the article. None of the proofreaders or spellcheckers caught the typo. Perhaps I am being pedantic, but Spider-Man is very possibly the most popular fictional character in the world right now. Yuval Noah Harari is supposed to have a grasp on humanity. The Guardian and Harper are supposed to be professional, trustworthy, intellectual. All of these sources keep bemoaning the lack of trust that the masses have in the media, in “the experts”, and in institutions of supposedly high culture. Um… These people can’t even spell Spider-Man right. They never checked themselves, and they can’t even be bothered to correct the error after years. The Guardian’s online archive of Harari’s text still maintains the misspelling.

The next essay, “The World after Coronavirus”, also contains an anomaly. On page 13 we read that “In the days ahead, each one of us should choose to trust scientific data and healthcare experts over unfounded conspiracy theories and self-serving politicians.” A page later the same sentence reappears, verbatim. Why would Harari write the same exact statement twice in the space of a few paragraphs? The article originally appeared in the Financial Times, and a quick check of the online version solves the mystery. On the webpage at ft.com this sentence indeed appears twice: once in its proper place in the main text, and then again as a pull-quote. When transposing the article to print, a Harper Perennial employee evidently highlighted everything (pull-quote too), copied, and pasted it all into a new document. Though the pull-quote was very much set aside, and had a font that was bolder and different from the main text, in the Harper file it became just another sentence. Then, either there was no proofreading before republication, or the proofreader(s) didn’t even notice the strangeness of repeating sentences in close proximity. Either way, how can we trust when virtually everyone now is such a colossal fuck-up? The data is often corrupted. The texts are altered. The technology that is supposed to help and make things easier often eventuates in more errors. Copying and pasting from a webpage is easier than rewriting manually, but look what it resulted in: a blunder in the exact sentence in which Harari stresses trust. And no one caught it.

So, again: This 24-page pamphlet, which was overseen by more editors across more platforms, has more errors in 24 pages than Homo Deus had in 400 pages just two years earlier. People are losing it. The professionals are losing it. The demands that everyone just trust them seem more and more comical.

But—yeah, yeah—I get it. Everyone feels more overtaxed and more overstressed. Everyone makes mistakes, including these overlapping nexuses of professional smarty-pantses. Maybe they all need even more antidepressants and anti-ADHD medications. Whatever they need, whatever they get, it never seems to be enough. They want to be gods, though, or their bosses do, and yet the mistakes they’re making seem more and more prevalent. They seem more and more openly crazy and obviously dysfunctional. We all screw up, but they’re screwing up more, more and more all the time.

Good.

Works Cited

Harari, Yuval Noah. Homo Deus: A Brief History of Tomorrow. New York: Harper Perennial, (2016) 2018.

——. Bonus Content for the Paperback Boxed Edition of Sapiens/Homo Deus. Pamphlet. New York: HarperCollins, 2020.

——. “Yuval Noah Harari: the world after coronavirus.” Online. https://www.ft.com/content/19d90308-6858-11ea-a3c9-1fe6fedcca75.

——. “Yuval Noah Harari: ‘Will coronavirus change our attitudes to death? Quite the opposite’.” Online. https://www.theguardian.com/books/2020/apr/20/yuval-noah-harari-will-coronavirus-change-our-attitudes-to-death-quite-the-opposite.
